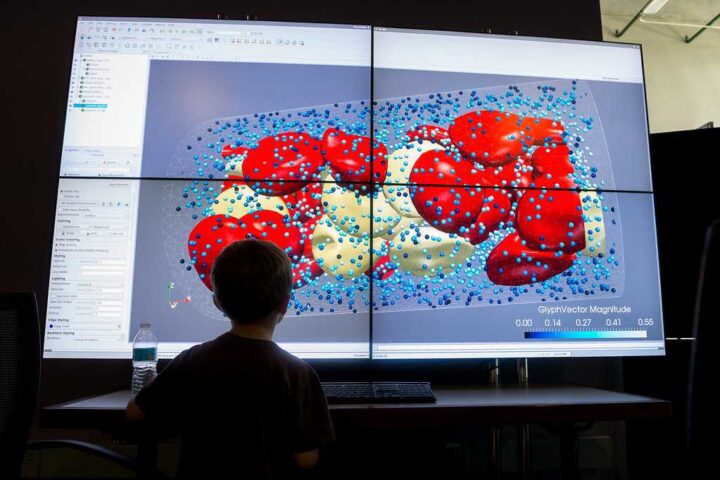

Machine-learning models designed to mimic human decision-making can make harsher judgments about rule violations than humans do. MIT researchers found that such models often fail to replicate human decisions about rule violations: models trained on descriptive data tend to over-predict violations compared with human judgments. Because models like these are used in the criminal justice system and many other areas, training them on descriptive data can have serious consequences.

Artificial intelligence systems may make harsher judgments about rule violations because they apply rules mechanically, without human nuance. Normative data, labeled by humans who explicitly decide whether a rule has been violated, is therefore crucial for training models that make such judgments. Yet descriptive data, which captures only factual features, is often used instead, leading to over-prediction of rule violations.

The MIT study examined how AI models judge perceived violations of a code when trained on normative versus descriptive data. Normative labels record a human's direct judgment of whether an item, such as a post, breaks a specific rule, while descriptive labels record only factual features, from which a violation is then inferred. Human participants were more likely to flag a code violation under the descriptive method than under the normative one.
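To make the distinction concrete, here is a minimal Python sketch. It assumes a hypothetical rule ("a comment violates the code if it contains an insult or profanity") and a handful of made-up toy labels; the feature names, the rule, and the data are illustrative only and do not reproduce the study's datasets. Descriptive labels record only the factual features, and the rule is then applied to them mechanically; normative labels record the labeler's direct judgment, which can allow for nuance such as mild profanity a human considers acceptable.

```python
from dataclasses import dataclass


# Hypothetical rule for illustration only: an item violates the code
# if it contains a personal insult OR profanity.
@dataclass
class Item:
    has_insult: bool           # descriptive label: a factual feature
    has_profanity: bool        # descriptive label: a factual feature
    normative_violation: bool  # normative label: labeler judged the rule directly


def rule_from_features(item: Item) -> bool:
    """Apply the rule mechanically to the descriptive (factual) labels."""
    return item.has_insult or item.has_profanity


def violation_rate(flags: list[bool]) -> float:
    """Fraction of items flagged as violations."""
    return sum(flags) / len(flags) if flags else 0.0


# Made-up toy labels, not data from the study.
items = [
    Item(True, False, True),
    Item(False, True, False),  # mild profanity a labeler judged acceptable
    Item(False, False, False),
    Item(True, True, True),
    Item(False, True, False),
]

descriptive_flags = [rule_from_features(it) for it in items]
normative_flags = [it.normative_violation for it in items]

print(f"descriptive-derived violation rate: {violation_rate(descriptive_flags):.0%}")
print(f"normative violation rate: {violation_rate(normative_flags):.0%}")
```

Because the mechanical rule has no room for the labelers' nuance, the descriptive route flags more items than the normative one on this toy data, which mirrors the direction of the effect described above.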

Inaccuracies in these models can have significant real-world consequences, for example in decisions about bail amounts or criminal sentences. Greater transparency about how training data is collected is needed to address these flaws.

Professor Marzyeh Ghassemi stresses the significance of the study's findings and the potential harm caused by models trained on mismatched data. The study highlights the need to use data collected in the same context as the judgment the model is meant to make. Distinguishing between descriptive and normative data is essential to reproduce human judgment accurately.

Openly documenting how data was collected is necessary to ensure fairness in machine-learning systems. Repurposing descriptive data for normative judgments can produce models with overly harsh moderation. Humans weigh nuance and draw distinctions that machine-learning models often fail to replicate.

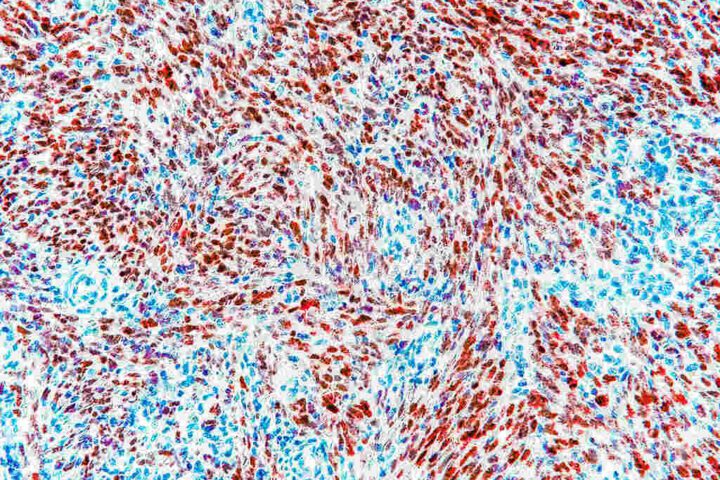

One part of the MIT study asked participants to label dog images in order to compare normative and descriptive responses. Normative labelers, who were shown the rules, flagged fewer policy violations than descriptive labelers, whose factual labels implied more violations. Across the different datasets, the disparity between descriptive and normative labels ranged from 8 to 20 percent.

Models trained on descriptive data were less accurate than models trained on normative data, tending to falsely predict violations, and their accuracy dropped further on items that human labelers disagreed about. Dataset transparency and an understanding of how data was gathered are crucial to address these training flaws. Fine-tuning a descriptively trained model on a small amount of normative data may help mitigate the problem.
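The fine-tuning idea can be sketched with a tiny logistic-regression classifier in plain Python. Everything here is an assumption for illustration: the two features, the toy items, the learning rate, and the epoch count are hypothetical, and this article does not specify which models the researchers used. The model is first trained on descriptive-derived labels (violation whenever an insult or profanity is present), then training continues from those same weights on a small normative set in which profanity alone is not a violation.

```python
import math

# Hypothetical toy items: x = (has_insult, has_profanity). Illustrative only.
ITEMS = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x) -> float:
    """Model's probability that item x is a rule violation."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


def train(data, weights, bias, lr=0.5, epochs=300):
    """Full-batch gradient descent on the logistic loss, starting from the
    given parameters, so the same function serves for training from scratch
    and for fine-tuning an existing model."""
    weights = list(weights)
    n = len(data)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in data:
            err = predict(weights, bias, x) - y  # gradient of loss w.r.t. the logit
            for j, xj in enumerate(x):
                grad_w[j] += err * xj / n
            grad_b += err / n
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b
    return weights, bias


# Descriptive labels: violation inferred mechanically (insult OR profanity).
# Replicated to stand in for a large dataset; with averaged gradients the
# replication does not change the result.
descriptive = [(x, float(x[0] or x[1])) for x in ITEMS] * 50
# Small normative set (8 examples): labelers judged profanity alone acceptable.
normative = [(x, x[0]) for x in ITEMS] * 2

w_desc, b_desc = train(descriptive, [0.0, 0.0], 0.0)
w_ft, b_ft = train(normative, w_desc, b_desc)  # fine-tune from the descriptive model

profanity_only = (0.0, 1.0)
print(f"descriptive model: P(violation) = {predict(w_desc, b_desc, profanity_only):.2f}")
print(f"after fine-tuning: P(violation) = {predict(w_ft, b_ft, profanity_only):.2f}")
```

On this toy data the descriptively trained model assigns a high violation probability to a profanity-only item, and continuing training on the eight normative examples pulls that probability below 0.5 while still flagging items with an insult. Full-batch gradient descent keeps the sketch deterministic; a real system would use a library model and optimizer.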

Fine-tuning a descriptively trained model on normative data is a form of transfer learning; exploring it further, along with involving expert labelers such as doctors or lawyers, could shed more light on label disparities. Transparent documentation of data collection methods and careful selection of training data are essential for fair and accurate machine-learning systems. The research received funding from several institutions, including the Schwartz Reisman Institute for Technology and Society, Microsoft Research, and the Canada Research Council Chain.